This paper introduces the circuit design details of the Swish activation function and its derivative, implemented using RRAM devices and passive components.

Don't just go to the touristy Bourbon Street NOLA; go to the jazz-bar-speckled Frenchmen Street NOLA.

Example Jacobian: elementwise activation function.

The first and second derivatives of TanhExp, Swish, and Mish. Source publication: "TanhExp: A smooth activation function with high convergence speed for lightweight neural networks".

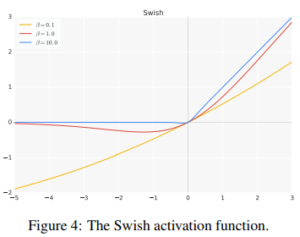
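As a rough illustration of the derivative and Jacobian of an elementwise activation, here is a minimal Python sketch using Swish, $f(x) = x \cdot \mathrm{sigmoid}(x)$. The function names (`swish_grad`, `elementwise_jacobian`, `numeric_grad`) are made up for this example and do not come from any of the quoted sources. Because each output depends only on its own input, the Jacobian is diagonal.

```python
import math


def sigmoid(x: float) -> float:
    """Logistic sigmoid, branched to avoid overflow for large |x|."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def swish(x: float) -> float:
    """Swish: f(x) = x * sigmoid(x)."""
    return x * sigmoid(x)


def swish_grad(x: float) -> float:
    """Analytic first derivative: sigmoid(x) + x * sigmoid(x) * (1 - sigmoid(x))."""
    s = sigmoid(x)
    return s + x * s * (1.0 - s)


def elementwise_jacobian(xs):
    """Jacobian of y_i = swish(x_i). Off-diagonal entries are zero
    because y_i does not depend on x_j for i != j."""
    n = len(xs)
    return [[swish_grad(xs[i]) if i == j else 0.0 for j in range(n)]
            for i in range(n)]


def numeric_grad(f, x: float, h: float = 1e-6) -> float:
    """Central finite difference, used only as a sanity check."""
    return (f(x + h) - f(x - h)) / (2.0 * h)


if __name__ == "__main__":
    for row in elementwise_jacobian([-1.0, 0.0, 2.0]):
        print(row)
```

Comparing `swish_grad` against `numeric_grad(swish, x)` at a few points is a quick way to catch a sign error in the analytic formula.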

Hard-mish activation function (Deep Learning forum post by rolo (Andrew Rowlinson), November 30, 2019, 9:44am): I recently read the MobileNetV3 paper, and they used an approximation for Swish called hard-swish: $x \cdot \mathrm{ReLU6}(x+3)/6$. I extended this to Mish and found that $x \cdot \mathrm{ReLU5}(x+3)/5$ also seems to be a good approximation for Mish.

If you want the tastiest, most fun, freedom-loving, jazzy part of America, take a weekend in NOLA.

Its gradient is a vector of partial derivatives with respect to each input.

For instance, the GELU (Gaussian error linear unit) activation function is $x\,\Phi(x)$ (Hendrycks and Gimpel, 2016), where $\Phi(x)$ is the standard Gaussian cumulative distribution function.

Tanh is basically like the other American sigmoid princess, Pocahontas, except just better, with those larger derivatives.

Nowadays there are many activation functions, but the best known is the rectified linear unit (ReLU). Google Brain introduced an activation function called Swish, defined as $f(x) = x \cdot \mathrm{sigmoid}(x)$. The discovery of Mish was inspired by Swish and was found by systematic analysis and experimentation over the characteristics that made Swish so effective [1].

Swish, Mish, and Serf belong to the same family of activation functions possessing the self-gating property. Like Mish, Serf also possesses a pre-conditioner, which results in better optimization and thus enhanced performance.
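To get a feel for how close the hard approximations quoted above are, the following Python sketch compares hard-swish with Swish and the forum post's hard-mish with Mish on a grid. It assumes the usual definition of Mish, $x \cdot \tanh(\mathrm{softplus}(x))$, which the text does not spell out, and the helper names (`relu_n`, `max_abs_gap`) and the grid range are arbitrary choices for illustration.

```python
import math


def relu_n(x: float, n: float) -> float:
    """ReLU clipped at n: ReLU6 for n = 6, ReLU5 for n = 5."""
    return min(max(x, 0.0), n)


def softplus(x: float) -> float:
    """ln(1 + e^x), written to stay finite for large |x|."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def swish(x: float) -> float:
    """Swish: x * sigmoid(x), with sigmoid expressed through tanh."""
    return x * 0.5 * (1.0 + math.tanh(0.5 * x))


def mish(x: float) -> float:
    """Mish, assuming the standard form x * tanh(softplus(x))."""
    return x * math.tanh(softplus(x))


def hard_swish(x: float) -> float:
    """Hard-swish as quoted: x * ReLU6(x + 3) / 6."""
    return x * relu_n(x + 3.0, 6.0) / 6.0


def hard_mish(x: float) -> float:
    """Hard-mish as proposed in the forum post: x * ReLU5(x + 3) / 5."""
    return x * relu_n(x + 3.0, 5.0) / 5.0


def max_abs_gap(f, g, lo: float = -6.0, hi: float = 6.0, steps: int = 1201) -> float:
    """Largest |f(x) - g(x)| over an evenly spaced grid on [lo, hi]."""
    xs = (lo + (hi - lo) * i / (steps - 1) for i in range(steps))
    return max(abs(f(x) - g(x)) for x in xs)


if __name__ == "__main__":
    print("hard-swish vs swish:", max_abs_gap(hard_swish, swish))
    print("hard-mish  vs mish :", max_abs_gap(hard_mish, mish))
```

The hard variants use only a clip, a multiply, and a divide, which is the usual motivation for them on hardware where `exp` and `tanh` are expensive.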

Tiana is a go-with-the-flow, natural-log-loving, jazz-playing New Orleans princess. Yeah, she falls into that whole obsessed-with-a-prince trope most of the Disney Princesses have, but let's be honest: she's Disney's first and only (I hope not for long) Cajun Princess, which makes her quite unique.

The activation function is the heart of the neural network, and its impact differs from one function to another.

We define Serf as $f(x) = x\,\mathrm{erf}(\ln(1 + e^x))$, where erf is the error function (1998).
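The Serf definition, $f(x) = x\,\mathrm{erf}(\ln(1 + e^x))$, translates almost directly into code. The sketch below is a plain-Python illustration using the standard library's `math.erf`; the `softplus` helper and its overflow-safe form are choices made for this example, not something taken from the Serf paper.

```python
import math


def softplus(x: float) -> float:
    """ln(1 + e^x), written to stay finite for large |x|."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def serf(x: float) -> float:
    """Serf: f(x) = x * erf(ln(1 + e^x))."""
    return x * math.erf(softplus(x))


if __name__ == "__main__":
    for x in (-3.0, -1.0, 0.0, 1.0, 3.0):
        print(f"serf({x:+.1f}) = {serf(x):+.4f}")
```

Like Swish and Mish, the function gates its input by a smooth factor between 0 and 1, so it is close to the identity for large positive inputs and close to zero for large negative ones.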
